Real-Time QA: Testing Every Feature As Soon As It's Built

Why waiting until end of sprint to test is expensive, and how feature-by-feature testing changes everything

What Is QA, and Why Does It Exist?

QA stands for Quality Assurance. It’s the process that verifies a piece of software does what it’s supposed to do — without crashing, without producing incorrect results, and without unexpected behaviors.

In practice, QA is performed by a person (or a team) whose role is to test the software from the user’s perspective. They don’t read the code: they use the application. They click, fill in forms, trigger actions, provoke edge cases, and observe whether the result matches expectations.

QA covers several levels:

- Functional tests: does the feature do what was asked?

- Regression tests: do new features break existing ones?

- Edge case tests: what happens with empty data, oversized files, or special characters?

- Interface tests: is the display consistent across screens and browsers?

- Performance tests: does it remain fast under load?

Without QA, bugs go straight to production. Users discover them. Trust erodes. Fixing bugs in production costs far more than catching them before release.

QA is not a luxury reserved for large teams. It’s the safety net between “it compiles” and “it actually works.”

The problem isn’t the existence of QA. It’s when QA happens.

Developers Forget Fast

Monday morning. Thomas finishes implementing the “PDF Report Export” feature. He knows this code inside out: why he chose that PDF generator, which edge case he had to work around in table rendering, why pagination is handled server-side rather than client-side.

Monday afternoon. He moves on to the next feature: the notifications API.

Friday of the following week. QA comes back to him:

“Thomas, the PDF export crashes when a report has more than 50 pages.”

Thomas opens the file. He re-reads the code. He searches. He re-reads again.

20 minutes later:

“Ah right, it’s the timeout in the generator config. I set a default value — I thought it would be enough.”

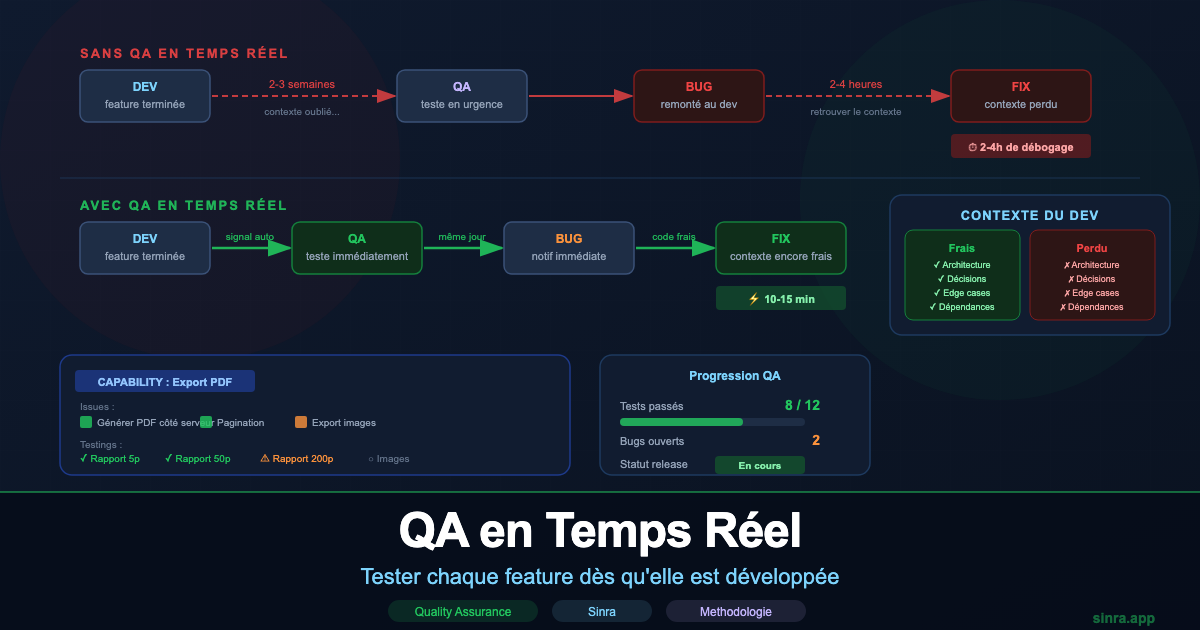

That bug would have taken 10 minutes to fix on Monday. It takes 2 hours on Friday: finding the context, rebuilding the original reasoning, identifying the root cause, checking the fix doesn’t break anything else.

The problem isn’t Thomas. The problem is the delay between development and testing.

What Cognitive Science Tells Us

Developers work in a state of deep cognitive load. When Thomas writes his PDF feature, his working memory holds:

- The feature architecture

- The technical constraints identified

- The design decisions made and why

- The edge cases anticipated, and those intentionally left out

- The dependencies with other parts of the codebase

This working memory is volatile. It starts degrading the moment Thomas moves on to something else. Within 24 hours, he’s forgotten 60% of the context. Within a week, he has to reconstruct everything from the code.

The ideal window to fix a bug is immediately after development.

The developer doesn’t need to reconstruct context. They see the bug, they know exactly why it happens, they fix it in minutes. Not hours.

The Illusion of “Test Everything at the End”

Most teams operate like this:

- Developers code for 2-3 weeks

- QA receives all features to test at end of sprint

- QA tests under pressure before the release

- Bugs come back to developers… who moved on long ago

This pattern creates three major problems.

Problem 1: Context Is Lost

When a developer receives a bug report two weeks after writing the code, they start from zero. They have to re-read the code, rediscover their own design decisions, reconstruct the execution path. What would have been obvious on the day becomes a full debugging exercise.

Real cost: a 15-minute bug becomes a 2-4 hour bug.

Problem 2: Bugs Block the Release

When all bugs surface at the same time at end of sprint, the team faces a crisis: fix fast, or ship with known bugs. Decisions made in that context are rarely the best ones.

Real cost: delayed releases, scope cut in a rush, technical debt accepted by default.

Problem 3: Bugs Compound

An untested feature influences subsequent features. If feature A has an architectural flaw that nobody sees, features B, C, and D may be built on top of it. When the problem is detected at end of sprint, sometimes several features need to be revisited at once.

Real cost: snowball effect on the entire release quality.

Few Methodologies Actually Integrate This

Real-time QA, feature by feature, is a logical given. Yet very few methodologies prescribe it clearly in their process.

Waterfall: QA Relegated to Final Phase

The waterfall model is the perfect counter-example. The full cycle follows a strict order: analysis, design, development, test, deployment. QA only intervenes after all development is complete.

In a six-month Waterfall project, the test phase begins in month five. Developers have been writing code for weeks or months. Context is lost. Discovered bugs require lengthy investigations. And if a bug reveals an architectural flaw, it’s often too late to fix it properly.

Scrum: Theory Versus Practice

Scrum prescribes that each sprint must produce a potentially shippable increment. In theory, this implies QA tests during the sprint, not after. In practice, most Scrum teams reproduce the same antipattern: developers code the first two weeks, QA tests the last week.

Scrum’s “Definition of Done” is supposed to include testing. But in many teams, “Done” means “developed and waiting for QA,” not “developed, tested, and validated.”

Result: QA remains a bottleneck at the end of each sprint, even in teams that call themselves agile.

Kanban: Continuous Flow But No Strong Signal

Kanban with its “Ready to Test” column can facilitate real-time QA if the team disciplines its process. A completed feature moves immediately into the QA column. But Kanban doesn’t explicitly prescribe that the developer stays available for immediate fixes. Coordination remains informal.

XP (Extreme Programming): The Closest

Extreme Programming is probably the methodology that comes closest to real-time QA, with its Test-Driven Development (TDD) and continuous integration practices. Automated tests are written before the code. Validation is permanent.

But XP focuses on automated tests, not human functional QA. Verifying that a feature matches actual user expectations remains a blind spot. And XP demands a level of technical discipline and skill that is rarely achieved in practice.

SAFe and Scaling Frameworks: More Process, Same Antipattern

SAFe (Scaled Agile Framework) adds layers of planning and coordination, but the core problem remains: test phases are often concentrated at the end of the Program Increment (PI). The framework’s complexity masks the same underlying antipattern.

The Flaws of QA in Spreadsheets

When teams try to better organize their QA, they often turn to Excel, Google Sheets, or Notion. Understandably: these tools are familiar, flexible, and free.

But they create structural problems that make real-time QA even harder.

Problem 1: The Spreadsheet Is Disconnected from Development

An Excel file of test cases is linked to nothing. It doesn’t know when a feature is complete. It can’t notify QA that an issue has been marked “Done.” The signal that should trigger testing has to go through a human: a Slack message, an email, a standup meeting.

Result: QA doesn’t test in real time because QA doesn’t know when to test.

Problem 2: The Spreadsheet Doesn’t Track History

A spreadsheet shows the current state: Passed, Failed, Not Run. It doesn’t show:

- Who tested

- Exactly when

- In what context (code version, environment)

- Whether it’s a first test or a retest after a fix

When a bug reappears in production two months later, it’s impossible to reconstruct what was tested and how.

Problem 3: The Spreadsheet Creates Multiple Versions

Test_Cases_v1.xlsx, Test_Cases_v2_FINAL.xlsx, Test_Cases_v2_FINAL_revised.xlsx, Test_Cases_v3_THOMAS.xlsx.

Which one is current? Which version is the reference? Teams waste time reconciling files instead of testing.

Problem 4: The Spreadsheet Isolates QA from the Rest of the Team

Developers work in their issue tracking tool. QA works in their spreadsheet. These two worlds don’t communicate automatically. A bug found in Excel must be manually copied into Jira or Linear. A fix validated in Jira doesn’t automatically update Excel.

Result: double entry, sync errors, and most importantly: the developer doesn’t see the bug at the right moment.

Problem 5: No Real-Time Global View

“Can we ship on Monday?”

With a spreadsheet, this question requires 30 to 60 minutes of work: open the file, count the passed tests, check Jira for bugs, compare against the feature list, mentally reconstruct the global state.

And the answer will still be approximate: “I think we’re good… unless I missed something.”

The Mechanics of Real-Time QA

For real-time QA to actually work, three conditions must be met.

Condition 1: An automatic signal when a feature is ready.

QA should not have to ask “is it ready?” They should be automatically notified when an issue is marked “Done.” Without this signal, QA stays in reactive mode and coordination remains informal.

Condition 2: Test cases linked to the capability, not in a separate file.

If test cases live in a disconnected spreadsheet, the link between “this capability is ready” and “here’s what to test” lives in someone’s head. It needs to live in the system.

Condition 3: The developer stays available for immediate fixes.

The cycle must be: developer finishes → QA tests → bug reported → developer fixes. This cycle must happen in hours, not days. This means a developer is not considered “free” until QA has validated their feature.

Sinra: Real-Time QA by Design

Sinra was built with this model as its foundational premise: testing must be continuous, linked to development, and visible in real time.

Testings Are Linked to Capabilities

In Sinra, testings (test cases) don’t live in a separate file. They’re attached directly to capabilities — the natural level for QA.

This is a deliberate choice. An issue is a development task: “Implement server-side PDF generation.” A QA test is a user behavior: “Export works with a 200-page report.” These two levels don’t overlap. QA thinks in behaviors, not technical tasks. The capability, which represents a coherent feature from the user’s perspective, is the right granularity.

When the capability “PDF Report Export” is created, QA defines their testings directly on it:

- Test with 5-page report

- Test with 50-page report

- Test with 200-page report

- Test with special characters in titles

- Test with images in the report

These testings are visible to the whole team: developers, QA, PO. When the capability’s issues are complete, QA executes its testings. The capability is only considered validated once all its testings pass.

QA Is Automatically Notified

When a developer marks an issue as complete, QA receives a notification: this issue is ready to test. No standup required, no Slack message, no manual checking.

The signal is immediate. QA can test while the developer still has that feature fresh in their head.

The Developer Sees the Bug Immediately

When QA marks a testing as “Failed” and creates an associated bug, the developer gets a notification. They see the bug while their feature is still fresh in their mind.

It’s no longer “Thomas, there’s a bug in the PDF export you wrote 3 weeks ago.” It’s “Thomas, there’s a bug in the PDF export you finished yesterday.”

The difference is radical. The fix takes 10 minutes instead of 2 hours.

Cycles Integrate Testing from the Start

In Sinra, a cycle (time-boxed work period) is not finished when development is done. It’s finished when testings are validated. The very definition of “done” includes QA.

current_testings (testings for the current cycle) is a first-class concept in Sinra. It’s not a column in a spreadsheet. It’s a native view that shows, in real time, how many testings have been executed, how many passed, how many failed, and how many remain.

The Release Doesn’t Progress Without QA

In Sinra, a release’s progress includes the state of testings. A capability cannot be marked “Done” in a release if its testings are not all validated. The system structurally prevents QA from being pushed to the end.

This is not a rule a team must impose through discipline. It’s the default behavior of the system.

What Changes in Practice

For the Developer

They receive QA feedback while still in the context of their feature. Fixes are fast, precise, and don’t require reconstructing weeks of mental context.

They’re no longer “released” to the next feature before their current one is validated. This creates a clear responsibility: a feature is done when it’s tested, not when it’s coded.

For QA

They know exactly what to test and when. Testings are prepared upfront, linked to features. They don’t have to ask if a feature is ready: the system tells them.

They test feature by feature, continuously, instead of testing everything in a rush at end of sprint. Their work is visible in real time. They’re no longer the invisible bottleneck.

For the Manager

The question “Can we ship?” has an answer in 30 seconds: X features are developed, Y testings passed, Z bugs still open. No Excel to count manually, no uncertainty.

Release decisions are made on data, not intuition.

For Product Quality

Bugs are caught early, fixed fast, and don’t compound. The snowball effect is stopped at the source. The release isn’t a race against the clock where QA rushes through everything: it’s the result of a continuous process.

The Core Principle

Testing a feature two weeks after it was built is testing at the worst possible moment: context is gone, the developer’s head is elsewhere, the bugs found delay the entire release.

Testing a feature 24 to 48 hours after it was built is testing at the best possible moment: code is fresh, the developer can fix fast, bugs are isolated and don’t affect the rest.

This principle should be at the heart of every development methodology. It’s rarely prescribed clearly. And the tools most teams use — spreadsheets and disconnected systems — make this way of working structurally difficult.

Sinra integrates real-time QA by design. Testings live with capabilities. Signals are automatic. Progress is visible, capability by capability. And developers fix their bugs while the code is still in their head.

That’s efficiency, not optimism.

Ready to integrate QA into your development flow? Try Sinra for free →

Discover how testings linked to capabilities and automatic notifications let your team test continuously, capability by capability, without ever waiting until end of sprint.

Ready to Transform Your Project Management?

Apply these insights with Sinra - the unified platform for modern teams.

Start Free Trial